2023数据采集与融合技术实践作业二

作业①:

要求:在中国气象网(http://www.weather.com.cn)给定城市集的 7日天气预报,并保存在数据库。

输出信息:

| 序号 | 地区 | 日期 | 天气信息 | 温度 |

|---|---|---|---|---|

| 1 | 北京 | 7日(今天) | 晴间多云,北部山区有阵雨或雷阵雨转晴转多云 | 31℃/17℃ |

| 2 | 北京 | 8日(明天) | 多云转晴,北部地区有分散阵雨或雷阵雨转晴 | 34℃/20℃ |

| 3 | 北京 | 9日(后台) | 晴转多云 | 36℃/22℃ |

| 4 | 北京 | 10日(周六) | 阴转阵雨 | 30℃/19℃ |

| 5 | 北京 | 11日(周日) | 阵雨 | 27℃/18℃ |

| 6...... |

Gitee 文件夹链接

1).代码:

from bs4 import BeautifulSoup

from bs4 import UnicodeDammit

import urllib.request

import sqlite3

class WeatherDB:

def openDB(self):

self.con=sqlite3.connect("weathers.db")

self.cursor=self.con.cursor()

try:

self.cursor.execute("create table weathers (wCity varchar(16),wDate varchar(16),wWeather varchar(64),wTemp varchar(32),constraint pk_weather primary key (wCity,wDate))")

except:

self.cursor.execute("delete from weathers")

def closeDB(self):

self.con.commit()

self.con.close()

def insert(self,city,date,weather,temp):

try:

self.cursor.execute("insert into weathers (wCity,wDate,wWeather,wTemp) values (?,?,?,?)" ,(city,date,weather,temp))

except Exception as err:

print(err)

def show(self):

self.cursor.execute("select * from weathers")

rows=self.cursor.fetchall()

print("%-16s%-16s%-32s%-16s" % ("city","date","weather","temp"))

for row in rows:

print("%-16s%-16s%-32s%-16s" % (row[0],row[1],row[2],row[3]))

class WeatherForecast:

def __init__(self):

self.headers = {

"User-Agent": "Mozilla/5.0 (Windows; U; Windows NT 6.0 x64; en-US; rv:1.9pre) Gecko/2008072421 Minefield/3.0.2pre"}

self.cityCode={"北京":"101010100","上海":"101020100","广州":"101280101","深圳":"101280601"}

def forecastCity(self,city):

if city not in self.cityCode.keys():

print(city+" code cannot be found")

return

url="http://www.weather.com.cn/weather/"+self.cityCode[city]+".shtml"

try:

req=urllib.request.Request(url,headers=self.headers)

data=urllib.request.urlopen(req)

data=data.read()

dammit=UnicodeDammit(data,["utf-8","gbk"])

data=dammit.unicode_markup

soup=BeautifulSoup(data,"lxml")

lis=soup.select("ul[class='t clearfix'] li")

for li in lis:

try:

date=li.select('h1')[0].text

weather=li.select('p[class="wea"]')[0].text

temp=li.select('p[class="tem"] span')[0].text+"/"+li.select('p[class="tem"] i')[0].text

print(city,date,weather,temp)

self.db.insert(city,date,weather,temp)

except Exception as err:

print(err)

except Exception as err:

print(err)

def process(self,cities):

self.db=WeatherDB()

self.db.openDB()

for city in cities:

self.forecastCity(city)

#self.db.show()

self.db.closeDB()

ws=WeatherForecast()

ws.process(["北京","上海","广州","深圳"])

print("completed")

结果:

2).心得体会:

该次实验是对天气网爬虫的复现作业,初步学习如何创建数据库、在数据库中创建表,对表进行插入操作与打印出表的内容。

作业②:

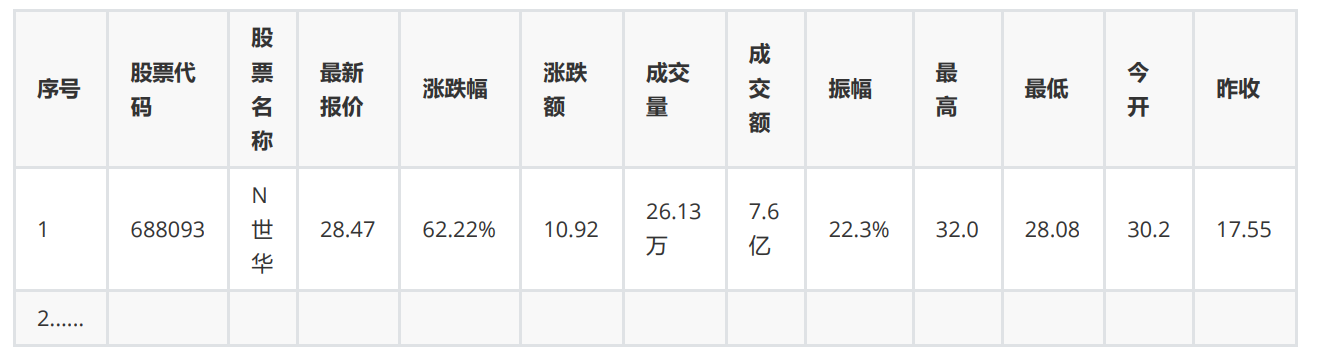

要求:用 requests 和 BeautifulSoup 库方法定向爬取股票相关信息,并存储在数据库中。

候选网站:东方财富网:https://www.eastmoney.com/

新浪股票:http://finance.sina.com.cn/stock/

技巧:在谷歌浏览器中进入 F12 调试模式进行抓包,查找股票列表加载使用的 url,并分析 api 返回的值,并根据所要求的参数可适当更改api 的请求参数。根据 URL 可观察请求的参数 f1、f2 可获取不同的数值,根据情况可删减请求的参数。参考链接:https://zhuanlan.zhihu.com/p/50099084

输出信息:

Gitee 文件夹链接

抓包过程:

1).代码:

import requests

import json

import sqlite3

# 创建表

def create_database():

conn = sqlite3.connect('stock1.db')

cursor = conn.cursor()

cursor.execute('''

CREATE TABLE IF NOT EXISTS stocks (

id INTEGER PRIMARY KEY AUTOINCREMENT,

code TEXT,

name TEXT,

latest_price REAL,

change_percent REAL,

change_amount REAL,

volume INTEGER,

turnover REAL,

amplitude REAL,

highest REAL,

lowest REAL,

open_price REAL,

close_price REAL

)

''')

conn.commit()

conn.close()

# 将数据插入表中

def save_stock_data_to_database(stock_list):

conn = sqlite3.connect('stock1.db')

cursor = conn.cursor()

for stock in stock_list:

f12 = stock['f12']

f14 = stock['f14']

f2 = stock['f2']

f3 = stock['f3']

f4 = stock['f4']

f5 = stock['f5']

f6 = stock['f6']

f7 = stock['f7']

f15 = stock['f15']

f16 = stock['f16']

f17 = stock['f17']

f18 = stock['f18']

cursor.execute('''

INSERT INTO stocks (

code, name, latest_price, change_percent, change_amount,

volume, turnover, amplitude, highest, lowest, open_price, close_price

) VALUES (?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?)

''', (f12, f14, f2, f3, f4, f5, f6, f7, f15, f16, f17, f18))

conn.commit()

conn.close()

# 将数据输出

def print_database_contents():

conn = sqlite3.connect('stock1.db')

cursor = conn.cursor()

cursor.execute('SELECT * FROM stocks')

print("{:<5} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7}".format(

"序号", "股票代码", "股票名称", "最新报价", "涨跌幅", "跌涨额", "成交量", "成交额", "振幅", "最高", "最低", "今开", "昨收"))

for row in cursor.fetchall():

print("{:<5} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7} {:<7}".format(

row[0], row[1], row[2], row[3], row[4], row[5], row[6], row[7], row[8], row[9], row[10], row[11], row[12]))

conn.close()

def scrape_and_store_data(base_url1,base_url2, total_pages):

create_database()

for page_number in range(1, total_pages + 1):

page_url = f"{base_url1}&pn={page_number}{base_url2}"

response = requests.get(page_url)

if response.status_code == 200:

data = response.text

json_data = json.loads(data[data.find('{'):data.rfind('}') + 1])

stock_list = json_data['data']['diff']

save_stock_data_to_database(stock_list)

else:

print(f"无法从第 {page_number} 页获取数据。")

def main():

base_url1 = 'http://95.push2.eastmoney.com/api/qt/clist/get?cb=jQuery112404577990037157569_1696660645140'

base_url2 = '&pz=20&po=1&np=1&ut=bd1d9ddb04089700cf9c27f6f7426281&fltt=2&invt=2&wbp2u=|0|0|0|web&fid=f3&fs=m:0+t:6,m:0+t:80,m:1+t:2,m:1+t:23,m:0+t:81+s:2048&fields=f1,f2,f3,f4,f5,f6,f7,f8,f9,f10,f12,f13,f14,f15,f16,f17,f18,f20,f21,f23,f24,f25,f22,f11,f62,f128,f136,f115,f152&_=1696660645141'

total_pages = 3 # 根据需要更改要爬取的页数

scrape_and_store_data(base_url1, base_url2,total_pages)

print_database_contents()

if __name__ == "__main__":

main()

结果:(以三页为例)

2).心得体会:

本次实验是尝试抓包且通过json提取数据的,需要注意的是,如果没有对数据库中建立的表格进行清除,可以手动清除库后再次运行或是更换数据库名字,否则就会出现多次数据内容叠加在一个表格的情况。

此次实验对抓包的概念有了初步的了解与体验,也在不断尝试中逐渐熟悉这个过程。

作业③:

要求:爬取中国大学2021主榜 (https://www.shanghairanking.cn/rankings/bcur/2021) 所有院校信息,并存储在数据库中,同时将浏览器F12调试分析的过程录制Gif加入至博客中。

技巧:分析该网站的发包情况,分析获取数据的api

输出信息:

| 排名 | 学校 | 省市 | 类型 | 总分 |

|---|---|---|---|---|

| 1 | 清华大学 | 北京 | 综合 | 969.2 |

Gitee****文件夹链接

同样先进行抓包:

调试分析过程:

但是与上一次实验不同的是,这次的js文件点进去中文是乱码,可以通过

r.encoding = r.apparent_encoding

进行转码

1).代码:

import requests

import re

import sqlite3

def get_university_data(url):

r = requests.get(url)

r.raise_for_status()

data = r.text

return data

#创建表

def create_database():

conn = sqlite3.connect('university_data.db')

cursor = conn.cursor()

cursor.execute('''

CREATE TABLE IF NOT EXISTS universities (

id INTEGER PRIMARY KEY AUTOINCREMENT,

ranking INTEGER,

name_cn TEXT,

province TEXT,

category TEXT,

total_score REAL

)

''')

conn.commit()

conn.close()

#将数据插入表

def save_university_data_to_database(data):

conn = sqlite3.connect('university_data.db')

cursor = conn.cursor()

rname = 'univNameCn:"(.*?)"'

rprovince = 'province:(.*?),'

rcategory = 'univCategory:(.*?),'

rscore = 'score:(.*?),'

namelist = re.findall(rname, data, re.S | re.M)

provincelist = re.findall(rprovince, data, re.S | re.M)

categorylist = re.findall(rcategory, data, re.S | re.M)

scorelist = re.findall(rscore, data, re.S | re.M)

# 确保所有列表的长度一致

min_length = min(len(namelist), len(scorelist), len(provincelist), len(categorylist))

for i in range(min_length):

cursor.execute('INSERT INTO universities (ranking, name_cn, province, category, total_score) VALUES (?, ?, ?, ?, ?)',

(i+1, namelist[i], provincelist[i], categorylist[i], scorelist[i]))

conn.commit()

conn.close()

#将结果输出

def print_database_contents():

conn = sqlite3.connect('university_data.db')

cursor = conn.cursor()

cursor.execute('SELECT * FROM universities')

print("{:<10} {:<10} {:<10} {:<10} {:<10}".format("排名", "学校", "省市", "类型", "总分"))

for row in cursor.fetchall():

print("{:<10} {:<10} {:<10} {:<10} {:<10}".format(row[1], row[2], row[3], row[4], row[5]))

conn.close()

if __name__ == "__main__":

page_url = 'https://www.shanghairanking.cn/_nuxt/static/1695811954/rankings/bcur/2021/payload.js'

university_data = get_university_data(page_url)

create_database()

save_university_data_to_database(university_data)

print_database_contents()

结果:

2).心得体会:

同样为抓包与数据库的实验,不同的是,本题需要对url内容进行转码以及使用正则表达式提取所需信息,通过此次实验,更加深刻地理解数据库的相关应用以及抓包过程更加熟悉。