I. Kubernetes (K8s) Components

1.1 Control Plane

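The control plane components make global decisions about the cluster (for example, scheduling) and detect and respond to cluster events.

The control plane can be deployed on multiple nodes for high availability. It does not run application workloads, so its overhead is small. For a learning setup, a single dedicated machine is enough.

- Kubernetes API Server (kube-apiserver)

  The main service that exposes the HTTP RESTful API. It is the only entry point for operating on any resource and the entry process for controlling the cluster. Other components watch it to learn what work to do.

- Kubernetes Controller Manager (kube-controller-manager)

  The automation control center for all resource objects. It is made up of the following controllers:

  - Node controller: notices and responds when a node goes down or fails.

  - Job controller: watches Job objects and creates Pods that run each task to completion.

  - Endpoints controller: the controller for Endpoints objects. It watches Services and Pods. Whenever either changes, it reconciles the corresponding Endpoints objects by creating, updating, or deleting them.

  - Service Account & Token controllers: create a default account and API access tokens for new namespaces.

- Kubernetes Scheduler (kube-scheduler)

  Assigns Pods to worker nodes. For example, when a user deploys a service and a new Pod is created without a node, the scheduler selects a worker node for it to run on.

- etcd

  A highly available, distributed key-value store. All the metadata the API Server needs is stored in etcd. Running etcd as a replicated cluster keeps the state of the whole Kubernetes control plane highly available.

  Only the API Server reads and writes etcd directly. Every other component must go through the API Server's interface to read or write data.

- Cloud Controller Manager

  Embeds cloud-specific control logic. It separates the components that interact with a cloud platform from those that only interact with the cluster. (Not needed in a learning environment.)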
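Every controller in kube-controller-manager follows the same pattern: compare the desired state recorded through the API Server with what actually exists, then act to close the gap. The toy sketch below shows that control loop for a replica count. `ReplicaState`, `reconcile` and the generated Pod names are hypothetical. Real controllers read and write objects through the API Server, not in-memory lists.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import List, Tuple

_pod_ids = count(1)  # hypothetical name generator for new Pods


@dataclass
class ReplicaState:
    """Toy stand-in for objects the API Server would hold."""
    desired: int                                    # like spec.replicas
    pods: List[str] = field(default_factory=list)  # Pods that currently exist


def reconcile(state: ReplicaState) -> List[Tuple[str, str]]:
    """One pass of a control loop: compare desired vs. observed state and act.

    Returns the actions taken; an empty list means nothing needed changing.
    """
    actions: List[Tuple[str, str]] = []
    diff = state.desired - len(state.pods)
    for _ in range(max(diff, 0)):
        # Too few Pods: create the missing ones.
        name = f"pod-{next(_pod_ids)}"
        state.pods.append(name)
        actions.append(("create", name))
    for _ in range(max(-diff, 0)):
        # Too many Pods: delete the most recently created ones.
        actions.append(("delete", state.pods.pop()))
    return actions


if __name__ == "__main__":
    s = ReplicaState(desired=3)
    print(reconcile(s))  # creates three Pods
    s.pods.pop(0)        # simulate one Pod dying
    print(reconcile(s))  # creates one replacement
    print(reconcile(s))  # [] because the state has converged
```

Running `reconcile` repeatedly is safe. Once the observed state matches the desired state, it returns an empty list. That is why a controller can simply re-run the comparison whenever something changes.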

1.2 Node

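Node components run on every node. They maintain the running Pods and provide the Kubernetes runtime environment.

These nodes are usually called worker nodes.

- kubelet

  Creates, starts, and stops the containers that belong to Pods. It works closely with the control plane to provide basic cluster management: it accepts Pods scheduled to its node and reports node status.

- kube-proxy

  A network proxy. It handles each node's networking inside Kubernetes and load-balances traffic sent to Services, including traffic from outside the cluster.

- Container Runtime

  Creates and manages containers on the local machine. For example, installing Docker provides the container runtime environment.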

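II. Basic Kubernetes Workflow

Control plane

- The application manifest is submitted to the Kubernetes API. The API Server writes the application's object definitions to etcd.

- Controllers notice the newly created objects by watching the API Server, then create one or more application instances.

- The Scheduler assigns a node to each instance.

Worker nodes

- kubelet notices that an instance has been assigned to its node and runs it through the container runtime.

- kube-proxy notices that the instances are ready to accept client connections, then configures networking and load balancing for them.

kubelet and the controllers keep monitoring the system so that the application stays running.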
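The Scheduler step can be pictured as a filter-then-score pass over the nodes. This is a deliberately simplified sketch. It assumes each Pod requests only CPU (in millicores) and uses a hypothetical "most CPU left over" score. The real kube-scheduler runs many filter and score plugins covering resources, affinity, taints and more.

```python
from typing import Dict, Optional


def schedule(pod_cpu_m: int, free_cpu_m: Dict[str, int]) -> Optional[str]:
    """Choose a node for a Pod that requests pod_cpu_m millicores of CPU.

    Filter: keep only nodes with enough free CPU.
    Score:  prefer the node with the most CPU left after placement;
            ties go to the alphabetically first node name.
    Returns None if no node fits (the real Pod would stay Pending).
    """
    feasible = {n: free for n, free in free_cpu_m.items() if free >= pod_cpu_m}
    if not feasible:
        return None
    # max() keeps the first of equal scores, and sorted() makes that the lowest name.
    return max(sorted(feasible), key=lambda n: feasible[n] - pod_cpu_m)


def schedule_all(pods: Dict[str, int], free_cpu_m: Dict[str, int]) -> Dict[str, Optional[str]]:
    """Bind Pods one at a time, deducting each bound Pod's request from its node."""
    free = dict(free_cpu_m)  # don't mutate the caller's dict
    placement: Dict[str, Optional[str]] = {}
    for pod, cpu in pods.items():
        node = schedule(cpu, free)
        placement[pod] = node
        if node is not None:
            free[node] -= cpu
    return placement


if __name__ == "__main__":
    print(schedule_all({"web-1": 500, "web-2": 500, "big": 4000},
                       {"node1": 1000, "node2": 1500}))
```

Suppose node1 has 1000m free and node2 has 1500m free:

- web-1 lands on node2.
- web-2 then ties between the two nodes and goes to node1 by name.
- big (4000m) fits nowhere, so it would remain Pending.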

III. Key Concepts

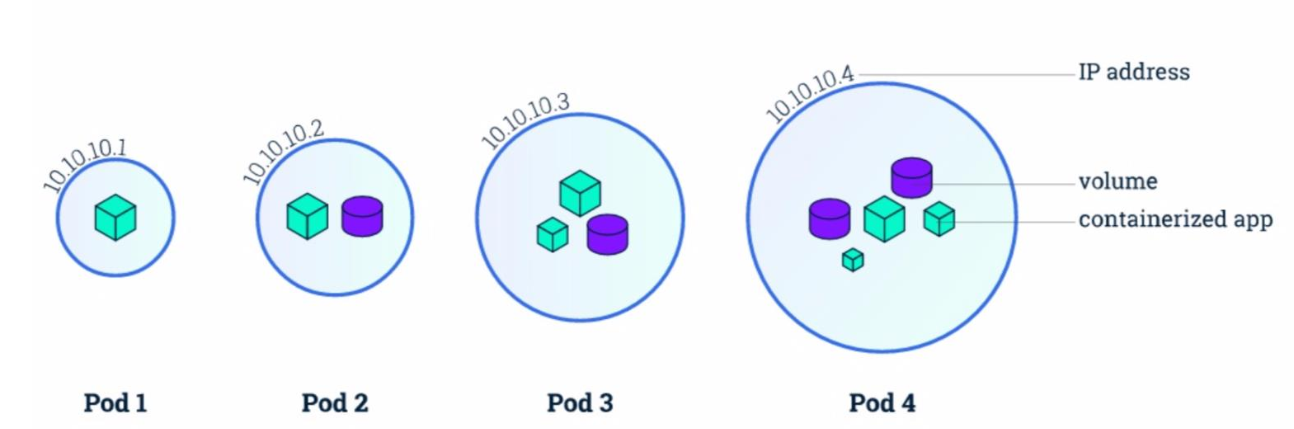

3.1 Pod

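The smallest deployable unit of computing that you can create and manage in Kubernetes.

A Pod is a group of one or more containers.

The contents of a Pod are always co-located and co-scheduled, and they run in a shared context.

Containers in a Pod share networking, storage, and a specification for how to run the containers. For example, containers in the same Pod can reach each other over localhost.

Shared context: a set of Linux namespaces, control groups (cgroups), and possibly other facets of isolation. These are the same technologies that isolate a Docker container. Within a Pod's context, individual applications may have further isolation applied.

In Docker terms, a Pod is similar to a group of Docker containers that share namespaces and filesystem volumes.

Pod example: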

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nginx
  namespace: dev        # this namespace must already exist (kubectl create namespace dev)
spec:
  containers:
  - name: nginx
    image: nginx:1.14.2
    ports:
    - containerPort: 80
```

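- apiVersion: the API version (group/version) of the object's schema; for a Pod it is v1
- kind: the type of object this YAML describes; for a Pod manifest the value is Pod
- metadata: the Pod's own metadata, such as its name and the namespace it belongs to
- spec: the detailed specification of everything inside the Pod
  - containers: the containers in the Pod
    - name: container name
    - image: container image
    - resources: the CPU, memory, GPU and other resources the container needs
    - command: the container's entrypoint command
    - args: arguments passed to the entrypoint
    - volumeMounts: the Pod volumes the container mounts
    - ports: ports the container exposes
  - volumes: the volumes defined in the Pod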
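The example below writes the Pod manifest above as a Python dict, showing how the nested fields fit together. A hypothetical `check_pod` helper tests a few of the fields just described. It is only an illustration; the API Server's own validation covers far more. Because `kubectl apply -f` also accepts JSON, `json.dumps(pod)` yields an equivalent manifest.

```python
import json

# The Pod manifest from the example above, as nested dicts and lists
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "nginx", "namespace": "dev"},
    "spec": {
        "containers": [
            {"name": "nginx", "image": "nginx:1.14.2", "ports": [{"containerPort": 80}]},
        ],
    },
}


def check_pod(obj: dict) -> list:
    """Return the problems found by a few basic checks (empty list = all passed)."""
    problems = []
    for key in ("apiVersion", "kind", "metadata", "spec"):
        if key not in obj:
            problems.append(f"missing {key}")
    if obj.get("kind") != "Pod":
        problems.append("kind should be Pod")
    if not obj.get("metadata", {}).get("name"):
        problems.append("missing metadata.name")
    containers = obj.get("spec", {}).get("containers") or []
    if not containers:
        problems.append("spec.containers is empty")
    for i, c in enumerate(containers):
        for key in ("name", "image"):
            if not c.get(key):
                problems.append(f"missing spec.containers[{i}].{key}")
    return problems


if __name__ == "__main__":
    print(check_pod(pod))             # no problems for the example manifest
    print(json.dumps(pod, indent=2))  # the same manifest as JSON
```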

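Pods in a Kubernetes cluster are used in two main ways:

- Pods that run a single container. The "one container per Pod" model is the most common Kubernetes use case. Here you can think of a Pod as a wrapper around a single container, and Kubernetes manages the Pod rather than the container directly.

- Pods that run multiple containers that work together. A Pod can encapsulate an application made of several tightly coupled, co-located containers that need to share resources.

Each Pod is meant to run a single instance of a given application. To scale an application horizontally (for example, running several instances to provide more resources), use multiple Pods, one per instance. In Kubernetes this is usually called replication.

Note: a Pod is not a process. It only provides the environment the containers run in. Once created, a Pod keeps running on its node until it finishes or is deleted.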

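Containers in a Pod come in several types:

- Init Container

  Init containers run, and must complete, before the app containers start.

- App Container

  The containers we normally deploy. They start only after all init containers have finished.

- Sidecar Container

  A container that runs alongside the app container in the same Pod. This is the sidecar pattern: it extends the main container's functionality without modifying it.

- Ephemeral Container

  Ephemeral containers are created through a special ephemeralcontainers handler in the API. They are not added to the Pod spec directly. They let you attach a debugging container to a running Pod's main process, which is useful when troubleshooting all kinds of problems.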
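The ordering between init and app containers can be modelled in a few lines. In this toy sketch, each init container is a Python callable that returns True on success, and `start_pod` is a hypothetical name. The real kubelet does not give up after a failure; it retries a failed init container according to the Pod's restartPolicy.

```python
from typing import Callable, Dict, List, Tuple

# (container name, function that runs it to completion and returns True on success)
InitContainer = Tuple[str, Callable[[], bool]]


def start_pod(init: List[InitContainer], apps: List[str]) -> Dict[str, object]:
    """Run init containers one by one; start app containers only if all succeed."""
    log: List[str] = []
    for name, run in init:
        log.append(f"init {name} started")
        if not run():
            # A failed init container blocks all app containers (the Pod stays Pending).
            log.append(f"init {name} failed")
            return {"phase": "Pending", "log": log, "running": []}
        log.append(f"init {name} completed")
    # Every init container finished: app containers (including sidecars) start together.
    return {"phase": "Running", "log": log, "running": list(apps)}


if __name__ == "__main__":
    ok = start_pod([("wait-for-db", lambda: True), ("migrate", lambda: True)],
                   ["web", "log-sidecar"])
    print(ok["phase"], ok["running"])
```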

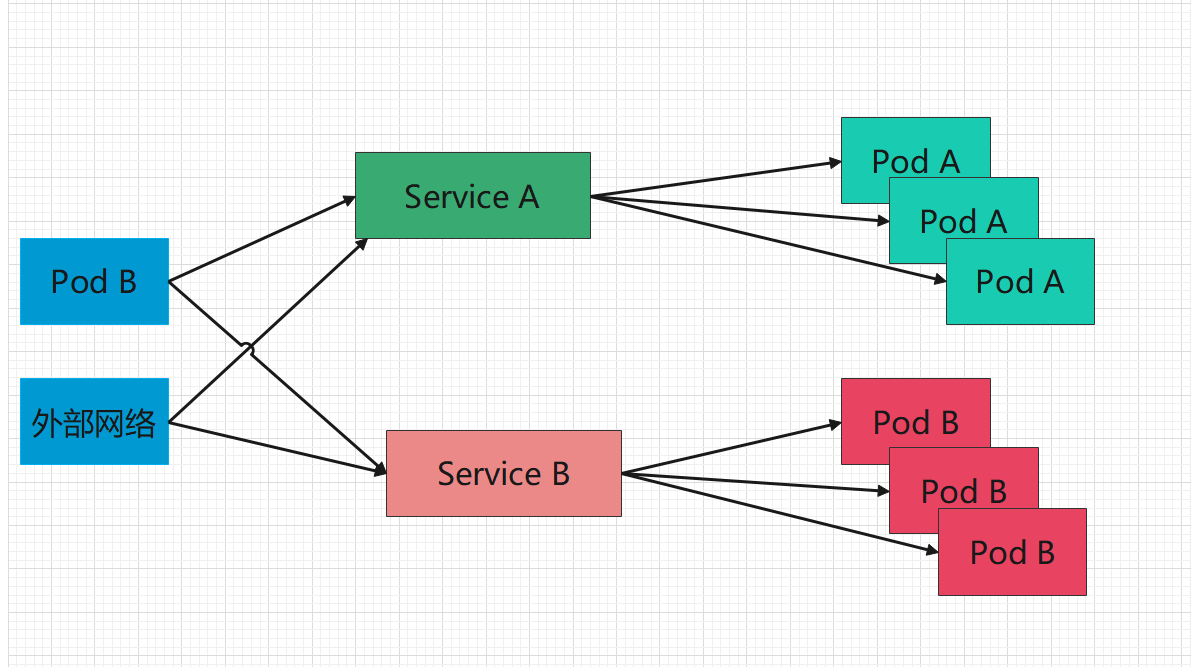

3.2 Service

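Official definition:

An abstract way to expose an application running on a set of Pods as a network service.

With Kubernetes, you don't need to modify your application to use an unfamiliar service discovery mechanism. Kubernetes gives Pods their own IP addresses and a single DNS name for a set of Pods, and it can load-balance across them.

Suppose you have a set of Pods that each listen on TCP port 9376 and carry the label app=MyApp: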

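```yaml
apiVersion: v1
kind: Service
metadata:
  name: my-service
  namespace: dev
spec:
  selector:
    app: MyApp          # route to Pods carrying this label
  ports:
  - protocol: TCP
    port: 80            # port the Service listens on
    targetPort: 9376    # port on the selected Pods
```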

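This configuration creates a Service object named "my-service". It forwards requests to TCP port 9376 on any Pod labeled "app=MyApp". Clients connect to the Service itself on port 80.

Kubernetes assigns this Service an IP address, sometimes called the "cluster IP". The Service proxies (kube-proxy) use this address.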
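What the Service above does with a request can be sketched in two steps:

1. Select the Pods whose labels contain every key/value pair in spec.selector.
2. Forward traffic sent to the Service port (80) to the targetPort (9376) on one of those Pods.

The Pod names and IPs below are made up. Round-robin is an assumption chosen for readability; in its default iptables mode, kube-proxy picks a backend at random.

```python
from itertools import cycle
from typing import Callable, Dict, List, Tuple


def select_endpoints(selector: Dict[str, str], pods: List[dict]) -> List[str]:
    """IPs of the Pods whose labels contain every key/value pair in the selector."""
    return [
        p["ip"] for p in pods
        if all(p["labels"].get(k) == v for k, v in selector.items())
    ]


def make_router(service: dict, pods: List[dict]) -> Callable[[int], Tuple[str, int]]:
    """Return route(port) -> (pod IP, targetPort), cycling through the endpoints."""
    spec = service["spec"]
    endpoints = select_endpoints(spec["selector"], pods)
    port_map = {p["port"]: p["targetPort"] for p in spec["ports"]}
    backends = cycle(endpoints)

    def route(port: int) -> Tuple[str, int]:
        if port not in port_map:
            raise ValueError(f"Service does not expose port {port}")
        if not endpoints:
            raise RuntimeError("no endpoints: no Pod matches the selector")
        return next(backends), port_map[port]

    return route


if __name__ == "__main__":
    svc = {"spec": {"selector": {"app": "MyApp"},
                    "ports": [{"protocol": "TCP", "port": 80, "targetPort": 9376}]}}
    pods = [
        {"ip": "10.244.1.5", "labels": {"app": "MyApp"}},
        {"ip": "10.244.2.7", "labels": {"app": "MyApp", "tier": "web"}},
        {"ip": "10.244.1.9", "labels": {"app": "Other"}},
    ]
    route = make_router(svc, pods)
    print([route(80) for _ in range(3)])
```

The Pod labeled app=Other is never selected. Extra labels on a Pod (such as tier=web) do not prevent a match.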

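IV. Installation

Three machines are used: one Master (control plane components) and two Nodes (worker nodes).

There are two ways to prepare the machines:

- Three VMware virtual machines running CentOS 7

- Pay-as-you-go servers rented from a cloud provider: very cheap, and you can destroy them when you are done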

4.1 Prerequisites

Set the hostname

```shell
# Run the matching command on each node
hostnamectl set-hostname master   # on the master
hostnamectl set-hostname node1    # on node1
hostnamectl set-hostname node2    # on node2
```

Edit hosts

```shell
# On all nodes, add the entries below to /etc/hosts
vim /etc/hosts
```

```text
192.168.200.104 master
192.168.200.105 node1
192.168.200.106 node2
```

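Disable SELinux and the firewall

```shell
# On all nodes: disable SELinux
setenforce 0   # switch to permissive mode immediately
sed -i --follow-symlinks 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/sysconfig/selinux   # permanent after reboot

# On all nodes: make sure the firewall is stopped and disabled
systemctl stop firewalld
systemctl disable firewalld
```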

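Disable the swap partition

```shell
# Turn swap off now (temporary; swap comes back after a reboot)
swapoff -a

# Turn swap off permanently by commenting out the swap line in /etc/fstab.
# Either edit the file (vim /etc/fstab) or use sed:
sed -ri 's/.*swap.*/#&/' /etc/fstab
# Afterwards the swap line should be commented out, e.g.:
# #/dev/mapper/centos-swap swap swap defaults 0 0

# Check: swap is off if the Swap row shows all 0.
# The fstab change is what keeps swap off after a reboot (reboot or shutdown -r now)
free -m
```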
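The sed command works as follows:

- `.*swap.*` matches any whole line that contains "swap".
- `#&` writes `#` followed by the matched text (`&`).

The Python equivalent below, run on an assumed two-line sample fstab, makes that visible. It also shows a side effect: running the command twice adds a second `#`, which is harmless but worth knowing.

```python
import re


def comment_swap_lines(fstab: str) -> str:
    r"""Python equivalent of: sed -r 's/.*swap.*/#&/'

    With re.MULTILINE, ^ and $ match at each line boundary, so the pattern
    covers one whole line at a time; \g<0> plays the role of sed's &.
    """
    return re.sub(r"^.*swap.*$", r"#\g<0>", fstab, flags=re.MULTILINE)


if __name__ == "__main__":
    sample = (
        "/dev/mapper/centos-root /    xfs  defaults 0 0\n"
        "/dev/mapper/centos-swap swap swap defaults 0 0\n"
    )
    once = comment_swap_lines(sample)
    print(once)
    print(comment_swap_lines(once))  # the swap line now starts with ##
```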

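Synchronize time over the network

```shell
# If ntpdate is missing, install it first:
# yum install -y ntpdate
ntpdate ntp1.aliyun.com   # sync the clock from Aliyun's NTP server
date                      # check the result
```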

Add the package repositories

```shell
# On all nodes
# Add the Kubernetes yum repository
cat <<EOF > kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
mv kubernetes.repo /etc/yum.repos.d/

# Add the Docker CE yum repository
yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
```

If this fails with -bash: yum-config-manager: command not found, run yum install -y yum-utils first.

If installing yum-utils fails with failure: repodata/repomd.xml from kubernetes: [Errno 256] No more mirrors to try., make sure repo_gpgcheck=0 is set in kubernetes.repo.

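4.2 Install the required components

- kubectl: the command-line tool for managing a Kubernetes cluster

- kubeadm: a tool for quickly setting up a Kubernetes (k8s) cluster; its kubeadm init and kubeadm join commands create the cluster and add nodes to it

- kubelet: the primary "node agent" that runs on every node

```shell
# On all nodes
# Kubernetes 1.23 can still use Docker as its runtime through dockershim (removed in 1.24)
yum install -y kubelet-1.23.9 kubectl-1.23.9 kubeadm-1.23.9 docker-ce
```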

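4.3 Start the services

```shell
# On all nodes
systemctl enable docker
systemctl start docker
systemctl enable kubelet
systemctl start kubelet
# Until kubeadm init / kubeadm join has run, kubelet restarts every few seconds; this is expected
```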

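4.4 Configure Docker

```shell
# On all nodes
# Kubernetes recommends that Docker and other runtimes use the systemd cgroup driver.
# kubeadm (v1.22+) configures kubelet with systemd by default, so if Docker still
# uses cgroupfs the drivers do not match and kubelet fails to start.
# registry-mirrors is an Aliyun image accelerator address; replace it with your own if needed.
cat <<EOF > daemon.json
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "registry-mirrors": ["https://tfm2bi1b.mirror.aliyuncs.com"]
}
EOF
mv daemon.json /etc/docker/

# Restart Docker to apply the change
systemctl daemon-reload
systemctl restart docker
```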
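The `cat <<EOF > daemon.json` plus `mv` approach overwrites any existing /etc/docker/daemon.json. If that file already contains other settings, merging is safer. The following is a minimal sketch that assumes Python 3 is available on the node. `merge_daemon_json` is a hypothetical helper; you would run it as root against /etc/docker/daemon.json before restarting Docker.

```python
import json
import os
import tempfile
from pathlib import Path


def merge_daemon_json(path: str, mirror: str) -> dict:
    """Set the systemd cgroup driver and add a registry mirror, keeping other keys."""
    p = Path(path)
    text = p.read_text() if p.exists() else ""
    cfg = json.loads(text) if text.strip() else {}

    # Drop any existing cgroup-driver option, then add systemd.
    opts = [o for o in cfg.get("exec-opts", []) if not o.startswith("native.cgroupdriver=")]
    opts.append("native.cgroupdriver=systemd")
    cfg["exec-opts"] = opts

    # Add the mirror only if it is not already listed.
    mirrors = cfg.get("registry-mirrors", [])
    if mirror not in mirrors:
        mirrors.append(mirror)
    cfg["registry-mirrors"] = mirrors

    # Write a temporary file next to the target, then atomically replace it.
    tmp = p.with_name(p.name + ".tmp")
    tmp.write_text(json.dumps(cfg, indent=2) + "\n")
    os.replace(tmp, p)
    return cfg


if __name__ == "__main__":
    # Demo on a throwaway file; on a real node you would pass /etc/docker/daemon.json.
    with tempfile.TemporaryDirectory() as d:
        f = Path(d) / "daemon.json"
        f.write_text('{"log-driver": "json-file"}')
        print(merge_daemon_json(str(f), "https://tfm2bi1b.mirror.aliyuncs.com"))
```

Running the helper a second time leaves the file unchanged, so it is safe to include in a provisioning script.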

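4.5 Initialize the cluster with kubeadm

```shell
# Run on the master node only
# If initialization fails, reset with: kubeadm reset

# Turn off swap (kubelet refuses to start while swap is on)
swapoff -a

# Initialize the control plane
# --apiserver-advertise-address: the master node's IP. Use your real master IP and keep it
#   consistent with /etc/hosts (the hosts example above lists the master as 192.168.200.104)
# --image-repository: registry to pull the control-plane images from
# --kubernetes-version: Kubernetes version to install
# --pod-network-cidr / --service-cidr: if you are not sure how to set them, keep these values;
#   the Pod CIDR must match "Network" in kube-flannel.yml (10.244.0.0/16)
# To see the other defaults, dump the default init configuration:
#   kubeadm config print init-defaults > kubeadm.yaml
kubeadm init \
  --apiserver-advertise-address=192.168.200.101 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version=v1.23.9 \
  --pod-network-cidr=10.244.0.0/16 \
  --service-cidr=10.96.0.0/16

# Save the "kubeadm join ..." command printed at the end
# If you lose it, generate a new one with: kubeadm token create --print-join-command

# Copy the admin kubeconfig so kubectl has permission to access the cluster.
# To use kubectl on another machine, copy this file from the master to ~/.kube/config there.
mkdir -p $HOME/.kube
cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
chown $(id -u):$(id -g) $HOME/.kube/config
```

The join command printed by kubeadm init looks like this (your token and hash will differ):

```shell
kubeadm join 192.168.200.101:6443 --token wou8ux.tpfiunjbgrjqy8vz --discovery-token-ca-cert-hash sha256:f1ae65b2e88427a44cd0883df9739bb13e3bb122227b7a12c4717d68c317cdc8
```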

If the token has expired (tokens last 24 hours by default), run kubeadm token create --print-join-command --ttl=0 on the master to get a new join command. With --ttl=0, the new token never expires.

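4.6 Join the worker nodes to the cluster

```shell
# Run on each worker node (node1 and node2), using the join command saved from kubeadm init
swapoff -a
kubeadm join 192.168.200.101:6443 --token wou8ux.tpfiunjbgrjqy8vz --discovery-token-ca-cert-hash sha256:f1ae65b2e88427a44cd0883df9739bb13e3bb122227b7a12c4717d68c317cdc8
```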

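4.6.1 Install the network plugin (flannel)

The nodes report NotReady until a network plugin is installed. Save the following manifest as kube-flannel.yml: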

```yaml
---
kind: Namespace
apiVersion: v1
metadata:
  name: kube-flannel
  labels:
    pod-security.kubernetes.io/enforce: privileged
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-flannel
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-flannel
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-flannel
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        #image: flannelcni/flannel-cni-plugin:v1.1.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel-cni-plugin:v1.1.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        #image: flannelcni/flannel:v0.19.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.19.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        #image: flannelcni/flannel:v0.19.0 for ppc64le and mips64le (dockerhub limitations may apply)
        image: docker.io/rancher/mirrored-flannelcni-flannel:v0.19.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        - name: EVENT_QUEUE_DEPTH
          value: "5000"
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate
```

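Apply it on the master, then check the nodes:

```shell
kubectl apply -f kube-flannel.yml
kubectl get nodes   # view node status; each node turns Ready once its flannel Pod is running
```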
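Two settings from earlier must agree:

- The --pod-network-cidr passed to kubeadm init must equal Network in flannel's net-conf.json.
- Neither the Pod range nor the Service range should overlap the machines' own network.

Below is a quick check using Python's standard `ipaddress` module. `check_cidrs` is a hypothetical helper, and the host network 192.168.200.0/24 is an assumption based on the example IPs.

```python
import ipaddress
import json

# net-conf.json from the kube-flannel-cfg ConfigMap in kube-flannel.yml
FLANNEL_NET_CONF = '{"Network": "10.244.0.0/16", "Backend": {"Type": "vxlan"}}'


def check_cidrs(pod_cidr: str, service_cidr: str, host_cidr: str, net_conf: str) -> list:
    """Return a list of problems; an empty list means the ranges look consistent."""
    pod = ipaddress.ip_network(pod_cidr)
    svc = ipaddress.ip_network(service_cidr)
    host = ipaddress.ip_network(host_cidr)
    flannel = ipaddress.ip_network(json.loads(net_conf)["Network"])

    problems = []
    if pod != flannel:
        problems.append(f"pod CIDR {pod} does not match flannel Network {flannel}")
    if pod.overlaps(svc):
        problems.append(f"pod CIDR {pod} overlaps service CIDR {svc}")
    for label, net in (("pod", pod), ("service", svc)):
        if net.overlaps(host):
            problems.append(f"{label} CIDR {net} overlaps host network {host}")
    return problems


if __name__ == "__main__":
    # Values from the kubeadm init command; 192.168.200.0/24 is assumed from the node IPs.
    print(check_cidrs("10.244.0.0/16", "10.96.0.0/16", "192.168.200.0/24", FLANNEL_NET_CONF))
```

With the values used in this guide, the check returns an empty list.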

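4.6.2 Install the Dashboard

GitHub: https://github.com/kubernetes/dashboard/

The cluster runs Kubernetes v1.23, so install Dashboard v2.5.1, which supports that version.

Install it (kubernetes-dashboard.yaml is listed at the end of this section):

```shell
kubectl apply -f kubernetes-dashboard.yaml
```

Accessing the Dashboard requires credentials. Create an administrator account for kubernetes-dashboard (dashboard-adminuser.yaml is also listed at the end), then read its login token:

```shell
kubectl apply -f dashboard-adminuser.yaml
# Print the token of the admin-user ServiceAccount.
# This relies on the token Secret that v1.23 creates automatically; from v1.24 on it is no
# longer created, and kubectl -n kubernetes-dashboard create token admin-user can be used instead.
kubectl describe secrets -n kubernetes-dashboard admin-user-token | grep token | awk 'NR==3{print $2}'
```

This prints the token, for example: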

eyJhbGciOiJSUzI1NiIsImtpZCI6Ik5nUVQzNjhGS0R6MGlWLU82VnFraEdpRWtCajFnQ1hhVWdfc1Fmbjl3NlEifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLTZscXd3Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiI2NWNmODVkMy0wYzM2LTQ3ZjEtYmRlMi05MDNlMDYxZjJjNTQiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZXJuZXRlcy1kYXNoYm9hcmQ6YWRtaW4tdXNlciJ9.G9zvUV2X_h5lHmk5YF7evjlA78x8EMEpbdVySOijXgIbToHt8XUw5H5YKOMRgEvQ-hVM__BaPAH5MhtcIQLFD7VSr6sXEU3tbBDaPGVEEA8fl4HZh-lLkcGb1OpGmgdmM3-V7W2iere79kD6JDkpq4NzuKDu_-OLyMl2eyBuKunPICeV0KG75rzfglopIqkZ5U6lYdiG9B8Kyk51RIHq6303E-6iGNoSYVfPoqNtxpX3Ws7qitAX5nDJ9X1DLjBSH7TKjeaBxgm7MOF2BJHvIIVSkTv03aXvJZ96yZdEzUlF7fvMEnF7sSsqYBM8k-W1hQG-6J1-6Mn2JkwCAFf8TA
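The token is a JWT: three base64url-encoded segments separated by dots (header.payload.signature). Decoding the middle segment shows which ServiceAccount the token belongs to, which helps confirm you copied the right one. For this admin user, the `sub` claim should be `system:serviceaccount:kubernetes-dashboard:admin-user`. The sketch below uses only the standard library with a hypothetical `jwt_claims` helper. It does not verify the signature, so never use it for authentication decisions.

```python
import base64
import json


def jwt_claims(token: str) -> dict:
    """Decode a JWT's payload (the 2nd segment) WITHOUT verifying its signature."""
    parts = token.strip().split(".")
    if len(parts) != 3:
        raise ValueError("a JWT has exactly three dot-separated segments")
    payload = parts[1]
    payload += "=" * (-len(payload) % 4)  # JWT segments drop base64 padding; restore it
    return json.loads(base64.urlsafe_b64decode(payload))
```

For example, assign the copied token to a variable `token` and inspect `jwt_claims(token)["sub"]`.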

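Check the Dashboard Pods and the Service port:

```shell
kubectl get pods --all-namespaces
kubectl describe pod kubernetes-dashboard --namespace=kubernetes-dashboard
kubectl get svc -n kubernetes-dashboard
```

Log in: open https://<node IP>:32000 in a browser. Port 32000 is the nodePort set in kubernetes-dashboard.yaml. Accept the warning about the self-signed certificate, choose Token, and paste the token obtained above.

If something goes wrong (for example, the page cannot be reached), you can flush iptables. Note that iptables --flush clears every rule in the filter table. Docker, kube-proxy and flannel normally re-create their own rules after they restart.

```shell
systemctl stop kubelet
systemctl stop docker
iptables --flush
systemctl start docker
systemctl start kubelet
```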

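kubernetes-dashboard.yaml. Note two settings: the Service uses type: NodePort with nodePort: 32000, which exposes the Dashboard on port 32000; the Dashboard Deployment sets nodeName: master, which pins it to the master node.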

```yaml
# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard
---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 32000
  selector:
    k8s-app: kubernetes-dashboard
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""
---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque
---
kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
  # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      nodeName: master
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.5.1
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
            # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper
---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.7
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}
```

dashboard-adminuser.yaml (creates the admin-user ServiceAccount and binds it to the cluster-admin ClusterRole):

```yaml
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin-user
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin-user
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
- kind: ServiceAccount
  name: admin-user
  namespace: kubernetes-dashboard
```